Resistor Reliability Report: Specs, Types & Metrics

2026-05-10 10:15:22


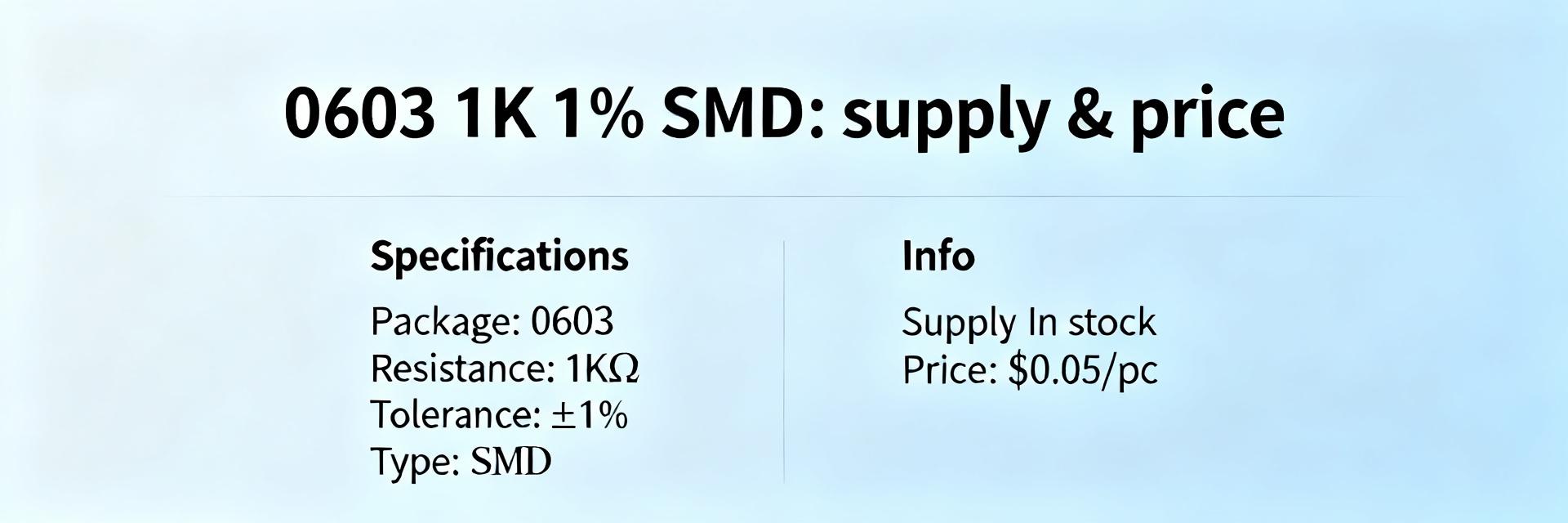

Introduction

Point: Recent lab and field analyses indicate that resistors account for a notable share of passive-component failures in harsh environments, affecting uptime and safety.

Evidence: Aggregated reliability studies and accelerated life testing (ALT) summaries show elevated drift, opens, and solder-joint issues under combined thermal and humidity stress.

Explanation: This report condenses actionable metrics, spec-reading guidance, and selection rules so engineers and purchasers can reduce field returns and safety risks.

Point: The goal is practical: translate test outputs and datasheet entries into designs with adequate margin.

Evidence: Cross-study comparisons and procurement case histories indicate that simple derating and mandatory ALT evidence lower in-field failure rates.

Explanation: Readers will gain stepwise checks, calculation templates, and a compact checklist to improve service life without speculative multipliers.

1 Why resistor reliability matters (background)

1.1 Key failure modes and system impact

Point: Resistor failures present as open circuits, shorts, resistance drift, tolerance shifts, thermal runaway, and mechanical fracture.

Evidence: Field returns and board-level fault analyses commonly attribute signal degradation, power loss, and safety trips to these modes.

Explanation: Understanding the dominant modes by application class (precision analog, power, pulsed) lets teams specify acceptable failure consequences and mitigations (redundancy, derating, monitoring) early in the design.

1.2 Relevant specs that predict reliability

Point: Datasheet items that most closely correlate with long life are rated power, maximum working voltage, temperature coefficient of resistance (TCR), tolerance, derating curves, thermal resistance, and moisture sensitivity indicators.

Evidence: Comparing stressed-population ALTs with nominal ratings shows that margin directly affects drift and open-failure incidence.

Explanation: Compute power stress = applied power / rated power and consult derating curves to set design margins; request explicit resistor specs for thermal and humidity limits during procurement.

2 Resistor reliability: field & lab data deep-dive

2.1 Failure-rate benchmarks and comparative datasets

Point: Commonly reported metrics include mean time to failure (MTTF), failures in time (FIT, failures per 10^9 device-hours), and distributions of failure modes by environment.

Evidence: Published reliability handbooks and aggregated ALT summaries recommend presenting ranges (e.g., orders of magnitude) rather than single-point rates to avoid overconfidence.

Explanation: Report FIT ranges per stress category and present a comparison table by temperature, humidity, and vibration to inform component selection and acceptance criteria.

2.2 Environmental stress effects: temp, humidity, vibration, and surge

Point: Each stressor accelerates different failure physics: humidity promotes corrosion and drift; thermal cycling drives solder and bond fatigue; vibration causes mechanical fracture; surge and pulses induce overheating or substrate damage.

Evidence: Correlated ALT logs show mode-specific clusters under targeted stress profiles.

Explanation: Design ALTs to isolate individual stresses, then map the observed modes to field monitors for predictive maintenance planning.

3 Metrics and calculations every designer should master

3.1 MTTF, FIT, and ALT Basics

Point: MTTF and FIT quantify expected failure frequency; ALT relates results under accelerated conditions to field life.

Evidence: A valid workflow defines failure criteria, captures time-to-failure distributions, and uses conservative extrapolation assumptions.

Explanation: Use a checklist (representative stress profile, adequate sample size, run-in, clear failure definitions, and documented logging) to ensure ALT outputs are trustworthy for life estimates.

3.2 Derating & Thermal Power

Point: Power-stress calculation and derating are the most direct reliability levers.
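As a rough illustration of the calculations in this section, the Python sketch below converts MTTF to FIT and computes the power-stress ratio (applied power divided by rated power) against a temperature-derated rating. It rests on assumptions that are not taken from this report: a constant failure rate for the FIT conversion, a linear derating curve, and placeholder values of 70 °C for the derating knee and 155 °C for the zero-power temperature. The function names are illustrative; a real design would read the derating curve from the part's datasheet.

```python
def fit_from_mttf(mttf_hours: float) -> float:
    """FIT (failures per 1e9 device-hours), assuming a constant failure rate."""
    if mttf_hours <= 0:
        raise ValueError("MTTF must be positive")
    return 1e9 / mttf_hours


def applied_power(current_a: float, resistance_ohm: float) -> float:
    """Dissipated power in watts, P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


def derated_power(rated_power_w: float, ambient_c: float,
                  knee_c: float = 70.0, zero_power_c: float = 155.0) -> float:
    """Allowed power at a given ambient for an ASSUMED linear derating curve.

    knee_c and zero_power_c are placeholders; take real values from the datasheet.
    """
    if ambient_c <= knee_c:
        return rated_power_w
    if ambient_c >= zero_power_c:
        return 0.0
    return rated_power_w * (zero_power_c - ambient_c) / (zero_power_c - knee_c)


def power_stress(applied_w: float, allowed_w: float) -> float:
    """Power stress ratio = applied power / allowed (rated or derated) power."""
    if allowed_w <= 0:
        raise ValueError("no power is allowed at this operating point")
    return applied_w / allowed_w


if __name__ == "__main__":
    p = applied_power(current_a=0.05, resistance_ohm=100.0)
    limit = derated_power(rated_power_w=0.5, ambient_c=100.0)
    print(f"stress vs nominal rating: {power_stress(p, 0.5):.2f}")
    print(f"stress vs derated rating: {power_stress(p, limit):.2f}")
    print(f"FIT for an MTTF of 1e7 h: {fit_from_mttf(1e7):.0f}")
```

In this example the part dissipates 0.25 W, which is half of its nominal 0.5 W rating, but at 100 °C ambient the assumed curve lowers the allowed power, so the stress ratio rises to roughly 0.77. Comparing against the derated limit rather than the nameplate rating is the point of the sketch.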
Evidence: Extract thermal resistance, rated power, and derating curves from resistor specs to compute the hot-spot-to-ambient temperature rise and the applied fraction of rating.

Explanation: Calculate power stress = (I^2·R) / rated power, apply the margin required for the application class, and verify using thermal resistance and PCB thermal design to avoid repetitive thermal excursions.

4 Resistor types and their reliability profiles

4.1 Common resistor technologies

Point: Different constructions yield distinct reliability trade-offs.

Evidence: Comparative data and failure-mode studies show wirewound excels in pulse and power, metal-oxide resists thermal drift, thin-film offers low TCR for precision, and carbon shows higher noise and humidity sensitivity.

| Type | Strengths | Weaknesses |
| --- | --- | --- |
| Thin/metal film | Low TCR, precision | Lower pulse capacity |
| Wirewound | High power, pulse | Inductive, size |
| Metal-oxide | Thermal stability | Moderate noise |
| Carbon | Low cost | Humidity sensitivity, drift |

4.2 Specialized resistors: high-power, precision, pulse, and high-voltage

Point: Specialized parts use substrates, metallization, and packaging to extend life in niches.

Evidence: Life-test summaries for high-power and high-voltage variants show improved survival when parts are matched to intended stressors and derated appropriately.

Explanation: Choose specialized resistors when standard parts cannot meet derating or pulse requirements; require manufacturer ALT summaries and batch traceability during procurement.

5 Testing, qualification, and standards

5.1 Recommended test protocols and ALT design

Point: Effective ALT setups include thermal cycling, power cycling, humidity with bias, surge/pulse testing, and mechanical shock/vibration.

Evidence: Protocols that specify sample size, run-in, and objective failure criteria produce reproducible data for acceptance decisions.

Explanation: Document ALTs with clear data-logging, failure analysis plans, and statistically supported sample counts to translate results into procurement acceptance limits.
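The report does not prescribe a method for choosing "statistically supported sample counts". One commonly used option, assumed here purely for illustration, is the zero-failure (success-run) binomial relation n = ln(1 - C) / ln(R), where R is the reliability to be demonstrated over the test and C is the confidence level. It applies only to a pass/fail test in which every unit survives and units fail independently. The function names and the example targets below are hypothetical.

```python
import math


def zero_failure_sample_size(reliability: float, confidence: float) -> int:
    """Smallest n such that n units passing with zero failures demonstrates
    `reliability` at `confidence` (success-run relation, an assumed method)."""
    if not 0.0 < reliability < 1.0:
        raise ValueError("reliability must be strictly between 0 and 1")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(reliability))


def demonstrated_reliability(sample_size: int, confidence: float) -> float:
    """Reliability demonstrated by `sample_size` units with zero failures."""
    if sample_size < 1:
        raise ValueError("sample_size must be at least 1")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return (1.0 - confidence) ** (1.0 / sample_size)


if __name__ == "__main__":
    for r, c in [(0.90, 0.90), (0.95, 0.90), (0.99, 0.95)]:
        n = zero_failure_sample_size(r, c)
        print(f"R={r:.2f}, C={c:.2f}: test {n} units with zero failures")
```

Tighter reliability targets drive the sample count up quickly, which is one reason to agree the target and confidence level with the supplier before the ALT is run. If failures are allowed in the acceptance plan, a different (non-zero-failure) binomial calculation is needed.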
5.2 Standards and handbook references to cite

Point: Standards provide test-method templates and threshold guidance for acceptance.

Evidence: Industry and military reliability handbooks list stress profiles, test fixtures, and mapping guidelines for component-level qualification.

Explanation: Reference standard parameter thresholds when defining the resistor specs required in purchase orders (POs); include derating, maximum working voltage, and humidity test levels as explicit contractual items.

6 Practical checklist

6.1 Spec-sheet and sourcing checklist for purchasers and engineers

Point: A concise procurement checklist reduces ambiguity and failure risk.

Evidence: Best-practice lists include required derating, TCR limits, tolerance, power and surge ratings, environmental qualification, ALT evidence, and lot traceability.

Explanation: Include explicit PO clauses requesting batch ALT summaries, life-test evidence, and return-material authorization terms to align supplier deliverables with design assumptions.

6.2 Design and assembly best practices to reduce failures

Point: PCB and assembly decisions materially affect resistor life.

Evidence: Thermal vias, generous copper pour for heat dissipation, correct solder profiles for SMDs, and controlled handling reduce thermal and mechanical stress-related failures.

Explanation: Specify reflow profiles, recommend conformal coating for humid environments, and instrument field units to log temperature and event counters for condition-based maintenance.

Summary

Prioritize datasheet-derived margins: use resistor specs to set derating and thermal budgets, and require ALT evidence during sourcing to validate longevity under the intended stresses.

Match resistor types to application stresses: choose thin- or metal-film for precision, wirewound for power/pulse, and ceramic-substrate for harsh environments to reduce mode-specific failures.

Adopt standard ALT protocols and procurement clauses: specify test profiles, sample sizes, and failure criteria so design margins are backed by measurable life estimates and traceable supplier data.

FAQ

How should engineers use resistor specs to predict reliability?

Use datasheet values (rated power, derating curve, TCR, thermal resistance) to compute power stress and hot-spot temperature rise. Require supplier ALT summaries that mirror your stress profile and apply conservative margins; incorporate these numbers into acceptance criteria and preventive-replacement schedules.

Which resistor types are best for high-power, high-reliability applications?

Wirewound and ceramic-substrate high-power variants generally offer superior pulse handling and thermal robustness. For precision power applications, select parts with documented surge ratings and low TCR; always confirm with ALT evidence under representative application loading.

What minimal ALT evidence should procurement request for critical resistors?

Request a concise ALT summary showing test conditions, sample size, failure criteria, time-to-failure distribution, and corrective-action notes. Include batch traceability and a statement that test stresses reflect expected field temperature, humidity, and power profiles.

Technical Reliability Analysis © 2023 Resistor Industry Report
